NVIDIA’s Autonomous Ecosystem: Redefining the Future Role of Maps

“There’s a double-parked vehicle in my lane. I’m going around it.”

“Hey, Mercedes. Can we speed up?” “Sure, I’ll speed up.”

This is an actual dialogue between a driver and the Mercedes-Benz autonomous driving AI, showcased during the NVIDIA GTC keynote in March 2026. This brief exchange symbolizes the autonomous driving industry’s transition into the era of Physical AI, moving beyond simple object recognition with cameras and sensors toward embedded reasoning. NVIDIA CEO Jensen Huang remarked, “The ChatGPT moment of self-driving cars has arrived.” Vehicles have reached a stage where they perceive, reason about, and judge road conditions, explain the logic behind their actions, and immediately incorporate spoken human instructions into their driving behavior.

Alpamayo: A Portfolio for Reasoning-Based Autonomous Driving

At CES in January 2026, NVIDIA announced Alpamayo, an integrated, open ecosystem for next-generation autonomous driving. Alpamayo serves as the foundation model layer of NVIDIA’s full-stack autonomous driving platform. It works with DRIVE Hyperion (hardware), Halos OS (operating system and platform software), DRIVE AV (application software), and Omniverse, Cosmos, and NuRec, which provide the infrastructure for the entire development pipeline.

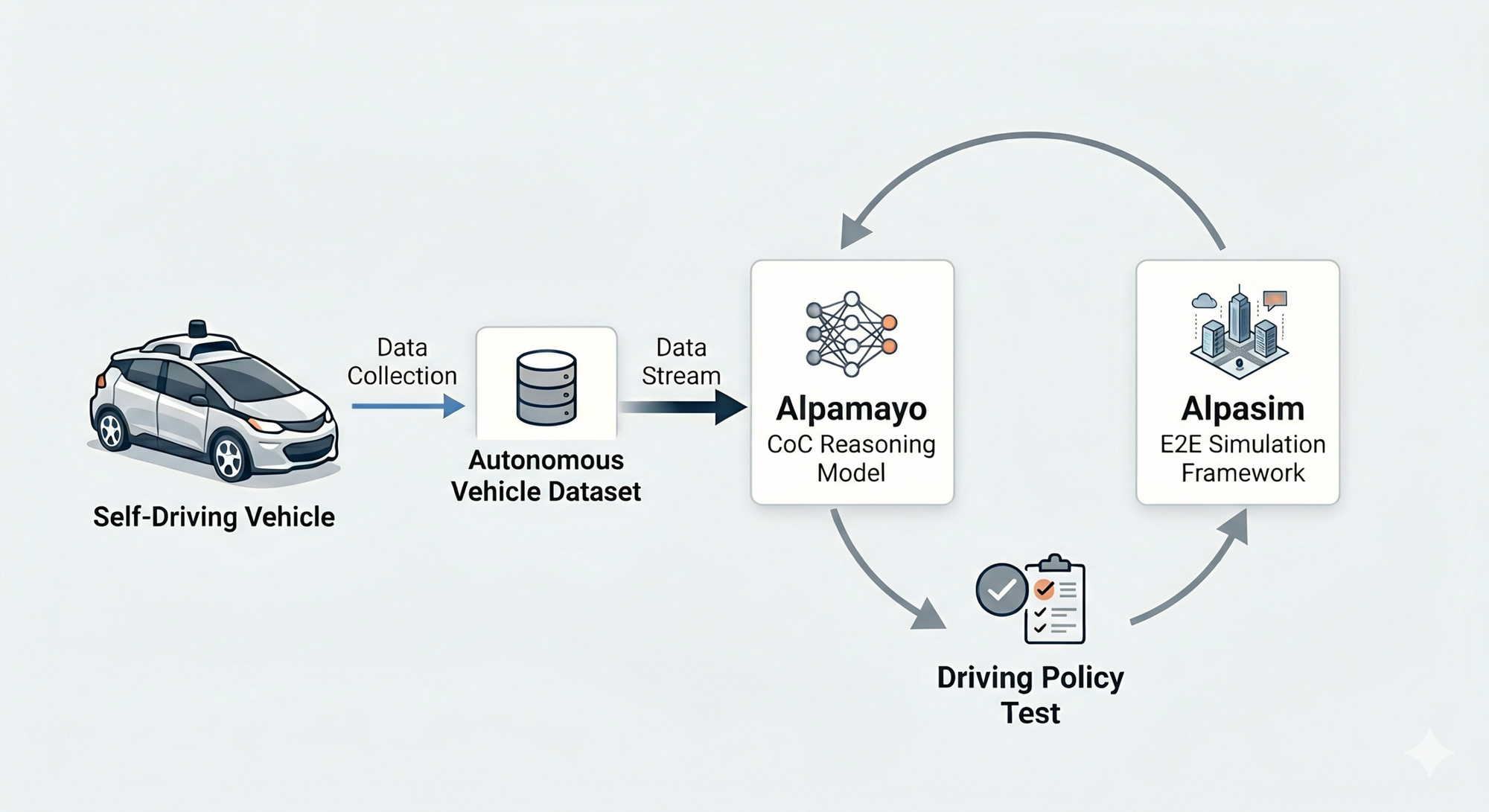

Alpamayo is a framework built on three pillars: the Reasoning VLA (Vision-Language-Action) Model, the AlpaSim simulator, and the Physical AI Open Dataset. [1: NVIDIA Announces Alpamayo Family of Open-Source AI Models and Tools to Accelerate Safe, Reasoning-Based Autonomous Vehicle Development (https://nvidianews.nvidia.com/news/alpamayo-autonomous-vehicle-development)]

1. Reasoning VLA (Vision-Language-Action) Model

The VLA model integrates visual perception (Vision), language understanding (Language), and behavior and decision-making (Action) within a Transformer architecture. Legacy systems kept perception and decision-making in separate modules, and their learning amounted to a primitive mapping of visual inputs directly to actions. VLA, in contrast, uses a “Chain-of-Thought (CoT)” technique: it converts visual data from sensors such as cameras and LiDAR into linguistic concepts, reasons over them, and generates decisions, such as situational assessments or driving maneuvers, that are passed on to specific applications. This process is built on a data flywheel based on NVIDIA Cosmos Reason, which continuously refines the reasoning model using real-world data collected from vehicles and robots.
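To make the perception, language, reasoning, and action flow concrete, here is a toy Python sketch. It is not Alpamayo: the `Detection` type and the `describe`, `reason`, and `act` functions are hypothetical, and the hand-written rules stand in for what a VLA model learns end to end inside a Transformer. The only point is that the action is derived from an explicit, readable reasoning trace rather than mapped directly from sensor input.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical perception output: an object class and its lane-relative position."""
    label: str
    lane_offset: int      # 0 = ego lane, -1 = left lane, +1 = right lane
    distance_m: float
    moving: bool


def describe(detections: list[Detection]) -> list[str]:
    """Vision -> Language: turn raw detections into short linguistic concepts."""
    concepts = []
    for d in detections:
        where = "in my lane" if d.lane_offset == 0 else "in an adjacent lane"
        state = "moving" if d.moving else "stopped"
        concepts.append(f"{state} {d.label} {where}, {d.distance_m:.0f} m ahead")
    return concepts


def reason(detections: list[Detection]) -> list[str]:
    """Language -> reasoning: build an explicit chain of thought (rule-based stand-in)."""
    steps = describe(detections)
    blocker = next((d for d in detections
                    if d.lane_offset == 0 and not d.moving and d.distance_m < 50), None)
    if blocker is None:
        steps.append("Ego lane is clear, so keep lane.")
    elif any(d.lane_offset == -1 and d.distance_m < 30 for d in detections):
        steps.append(f"Stopped {blocker.label} blocks my lane but the left lane "
                     "is occupied, so slow down and wait.")
    else:
        steps.append(f"Stopped {blocker.label} blocks my lane and the left lane "
                     "is free, so go around it.")
    return steps


def act(steps: list[str]) -> str:
    """Reasoning -> Action: map the final conclusion of the trace to a maneuver label."""
    conclusion = steps[-1]
    if "go around" in conclusion:
        return "NUDGE_LEFT"
    if "wait" in conclusion:
        return "DECELERATE"
    return "KEEP_LANE"


scene = [Detection("vehicle", 0, 35.0, moving=False),
         Detection("cyclist", 1, 20.0, moving=True)]
trace = reason(scene)
print("\n".join(trace))
print("action:", act(trace))
```

The scene mirrors the double-parked vehicle from the opening dialogue; the printed trace plays the role of the explanation the car gives its driver.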

However, applying the standard CoT structure directly to autonomous driving scenarios presents challenges. The causal link between specific events and their outcomes may not be learned clearly, and actions can become ambiguous in “gray area” situations, such as a pedestrian standing in an ambiguous position. The AI may also give undue weight to peripheral objects that have no direct impact on driving, such as light poles.

To address these issues, Alpamayo introduced the “Chain-of-Causation (CoC)” methodology, which builds on the CoT structure while substantially strengthening causal grounding. Rather than learning a simple one-to-one association between events and actions, CoC explicitly identifies the decisive events that lead to an action and states the logical basis for it. NVIDIA states that this has substantially improved the accuracy of auto-labeling. [2: From Research to Production: How Alpamayo Accelerates Autonomous Vehicle Development (https://www.nvidia.com/en-us/on-demand/session/gtc26-s81779/)] To date, Alpamayo, a model of about 10 billion parameters, has been trained on approximately 80,000 hours of driving data and 700,000 CoC segments. [3: NVIDIA GTC Automotive Special Address (https://www.youtube.com/watch?v=7N38fD4ksnI)]
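The difference CoC aims for, recording only the decisive causes of an action and ignoring bystanders such as light poles, can be sketched as a labeling step. This is a hypothetical illustration, not NVIDIA’s auto-labeling pipeline: the `CoCSegment` record and especially the corridor test in `is_causally_relevant` (2.5 m half-width, 60 m horizon) are assumptions chosen for clarity, whereas Alpamayo learns relevance through reasoning.

```python
from dataclasses import dataclass, field


@dataclass
class SceneObject:
    name: str
    lateral_m: float   # lateral distance from the ego path centerline (m)
    ahead_m: float     # longitudinal distance ahead of the ego vehicle (m)


@dataclass
class CoCSegment:
    """Hypothetical Chain-of-Causation record: decisive causes -> action, plus a rationale."""
    action: str
    decisive_events: list[str] = field(default_factory=list)
    rationale: str = ""


def is_causally_relevant(obj: SceneObject, corridor_half_width_m: float = 2.5,
                         horizon_m: float = 60.0) -> bool:
    """Assumed heuristic: only objects inside the planned driving corridor can cause an action."""
    return abs(obj.lateral_m) <= corridor_half_width_m and 0.0 <= obj.ahead_m <= horizon_m


def label_segment(objects: list[SceneObject], action: str) -> CoCSegment:
    """Keep only the decisive events and record why they lead to the action."""
    causes = [o.name for o in objects if is_causally_relevant(o)]
    rationale = (f"{action} because of: {', '.join(causes)}" if causes
                 else f"{action}: no decisive event in the driving corridor")
    return CoCSegment(action=action, decisive_events=causes, rationale=rationale)


scene = [
    SceneObject("double-parked vehicle", lateral_m=0.5, ahead_m=30.0),
    SceneObject("light pole", lateral_m=6.0, ahead_m=15.0),
    SceneObject("pedestrian on sidewalk", lateral_m=4.5, ahead_m=25.0),
]
segment = label_segment(scene, "nudge left around obstacle")
print(segment.decisive_events)  # ['double-parked vehicle']
print(segment.rationale)        # nudge left around obstacle because of: double-parked vehicle
```

A gray-area case such as the pedestrian on the sidewalk is where a fixed geometric rule like this one is least reliable, which is the gap learned causal grounding is meant to close.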

2. AlpaSim Simulation Tool

AlpaSim is a Python-based open simulation platform for closed-loop testing of autonomous driving that simulates realistic sensor data, vehicle dynamics, and traffic scenarios. It is used to test new algorithms, examine vehicle behavior in edge cases and harsh environments, and debug complex autonomous maneuvers. [4: AlpaSim GitHub (https://github.com/NVlabs/alpasim)] According to announcements at GTC 2026, 2 million autonomous driving simulations are run daily on the Omniverse platform, which serves as the foundation for AlpaSim.
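The defining feature of closed-loop testing is that the policy’s own actions change what the simulated sensors report next, unlike replaying a recorded log. The sketch below shows that feedback loop in miniature. It does not use AlpaSim’s API; the one-dimensional vehicle model, noisy range sensor, braking policy, and all numbers are hypothetical placeholders.

```python
import random
from dataclasses import dataclass


@dataclass
class VehicleState:
    position_m: float
    speed_mps: float


def simulate_sensor(ego: VehicleState, obstacle_pos_m: float, rng: random.Random) -> float:
    """Simulated range sensor: true gap to the obstacle plus Gaussian noise."""
    return (obstacle_pos_m - ego.position_m) + rng.gauss(0.0, 0.3)


def policy(measured_gap_m: float, speed_mps: float) -> float:
    """Driving policy under test: brake hard once the gap approaches the stopping distance."""
    stopping_distance = speed_mps ** 2 / (2 * 6.0) + 5.0   # 6 m/s^2 braking, 5 m margin
    return -6.0 if measured_gap_m <= stopping_distance else 1.0


def step_dynamics(ego: VehicleState, accel_mps2: float, dt: float) -> VehicleState:
    """Simple longitudinal kinematics; speed is clamped at zero (no reversing)."""
    speed = max(0.0, ego.speed_mps + accel_mps2 * dt)
    return VehicleState(ego.position_m + speed * dt, speed)


def run_closed_loop(obstacle_pos_m: float = 80.0, dt: float = 0.1,
                    steps: int = 300, seed: int = 0) -> tuple[bool, float]:
    """Each action changes the next sensor reading; that feedback makes the loop closed."""
    rng = random.Random(seed)
    ego = VehicleState(position_m=0.0, speed_mps=15.0)
    for _ in range(steps):
        gap = simulate_sensor(ego, obstacle_pos_m, rng)
        ego = step_dynamics(ego, policy(gap, ego.speed_mps), dt)
        if ego.position_m >= obstacle_pos_m:
            return True, ego.position_m      # collision: an edge case to debug
    return False, obstacle_pos_m - ego.position_m


collided, final_gap = run_closed_loop()
print(f"collision={collided}, final gap={final_gap:.1f} m")
```

Swapping in a different `policy` or obstacle position and checking the `collided` flag is the miniature equivalent of hunting for edge cases at scale.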

3. Physical AI Open Dataset

In the Alpamayo flywheel, real-world driving data is the fuel for the reasoning VLA. NVIDIA has released a total of 1,700 hours of driving data, collected in over 2,500 cities across 25 countries, as an open dataset on Hugging Face. The data was captured with multi-camera setups, LiDAR, and radar and covers a variety of traffic conditions, weather, obstacles, and pedestrian environments. [5: NVIDIA Autonomous Vehicle Dataset – Hugging Face (https://huggingface.co/datasets/nvidia/PhysicalAI-Autonomous-Vehicles)]

Alpamayo 1.5 Update

Alpamayo 1.5, unveiled at NVIDIA GTC 2026, adopts the latest Cosmos-Reason2 VLM, enabling it to handle more complex reasoning than previous models. With integrated navigation guidance, users can request route or lane changes in natural language, and the AI reflects these requests in its driving by combining destination data with relational reasoning. Additionally, reinforcement learning (RL) post-training has improved both the quality of reasoning and the consistency between reasoning and action. Flexibility in the number of cameras and model parameters has also been expanded. [6: Expanding the Alpamayo Open Platform for Developing Reasoning AVs Across Models, Data, and Simulation (https://huggingface.co/blog/drmapavone/nvidia-alpamayo-1-5)]

Alpamayo Deployment in Real-world Production

NVIDIA is targeting the passenger vehicle and shared mobility markets simultaneously with the Alpamayo platform. The new Mercedes-Benz CLA is the first vehicle to feature the Alpamayo reasoning engine and the full-stack DRIVE AV software. It is scheduled for release in the U.S. market in Q1 2026, followed by Europe in Q2 and Asia in the second half of the year. [7: Nvidia to launch its AI-based autonomous technology in 2026 (https://www.just-auto.com/news/nvidia-to-launch-its-ai-based-autonomous-technology-in-2026/)]

Uber Robotaxi Integration

Through a recent partnership with Uber, NVIDIA is actively applying Alpamayo to robotaxi commercialization. The two companies plan to expand autonomous robotaxi services to 28 cities worldwide by 2028, starting with Los Angeles and the San Francisco Bay Area in the first half of 2027. The roadmap involves using data collection vehicles to train the Alpamayo engine for each city’s environment, followed by pilot runs with safety operators, and eventually a transition to Level 4 driverless operation.

Collaboration with OEM Ecosystem

NVIDIA aims to bring Level 4 autonomous driving to mass-produced vehicles by 2028. This involves integrating DRIVE Hyperion hardware into vehicles from partners such as BYD, Geely, Nissan, and Hyundai. If the Uber robotaxi partnership, which uses NVIDIA’s full stack of AI models, OS, and applications, proves successful, this ecosystem is likely to expand further across global OEMs.

Does a Role for Maps Still Exist in Autonomous Driving?

A previous report in the A Piece of Map Insight series (October 2025) identified five roles for maps that would persist even in the era of end-to-end autonomous driving. Given the technological advances of recent months and NVIDIA’s ecosystem, those roles are expected to evolve as follows:

1. High-Precision Localization

Traditionally, the core role of HD maps was to match centimeter-level map data against real-time sensor readings to pinpoint a vehicle’s lane and coordinates. However, architectures such as the Alpamayo VLA model interpret road structures and surroundings directly from real-time sensor data. If all real-world sensor data is converted into simulation states for training and verification, the map’s role as a localization aid may diminish significantly.
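The traditional map-matching role can be illustrated with a minimal sketch, assuming a hypothetical HD-map slice that stores lane-boundary positions relative to the right road edge and a sensor that measures the vehicle’s distance to that edge. Combining the mapped geometry with the sensor measurement places the vehicle in a specific lane with sub-meter precision, which GNSS alone typically cannot guarantee. The lane layout and function name are illustrative only.

```python
from bisect import bisect_right

# Hypothetical HD-map slice: lateral positions (m) of lane boundaries, measured from the
# right road edge at the current road segment. Three lanes, each 3.5 m wide.
LANE_BOUNDARIES_M = [0.0, 3.5, 7.0, 10.5]


def localize_lane(measured_dist_to_right_edge_m: float,
                  boundaries: list[float] = LANE_BOUNDARIES_M) -> tuple[int, float]:
    """Map-matching sketch: place the ego on the map's lateral axis using a sensor-measured
    distance to a mapped feature (the right road edge), then look up the lane.

    Returns (lane index counted from the right, 0-based;
             offset from that lane's center in m, negative = right of center).
    """
    y = measured_dist_to_right_edge_m
    if not boundaries[0] <= y < boundaries[-1]:
        raise ValueError("position is outside the mapped roadway")
    lane = bisect_right(boundaries, y) - 1
    center = (boundaries[lane] + boundaries[lane + 1]) / 2
    return lane, y - center


lane, offset = localize_lane(5.1)
print(lane, round(offset, 2))   # 1 -0.15 -> middle lane, 0.15 m right of its center
```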

2. Safe Path Planning

Conventional navigation uses road network information and rules to determine a route in advance and guide the driver along it. Alpamayo 1.5, however, treats navigation guidance as an input to the reasoning engine, not as the final conclusion: the AI decides through its own reasoning, in real time, whether to turn at an intersection. Consequently, the map’s role may be limited to providing destination information and basic road topology.
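The shift from “route as command” to “route as input” can be sketched as a scoring step in which the navigation hint is one weighted term among others and physically blocked options are excluded. The `RouteHint` and `Perception` types, the weights, and the risk penalty are hypothetical; Alpamayo 1.5 reasons with a learned model rather than a hand-tuned score.

```python
from dataclasses import dataclass


@dataclass
class RouteHint:
    """Navigation guidance from the map: a preferred maneuver, not a command."""
    maneuver: str          # e.g. "turn_right", "go_straight"
    weight: float = 1.0    # how strongly the route preference counts


@dataclass
class Perception:
    """What the sensors report about each candidate maneuver at the intersection."""
    blocked: set[str]          # maneuvers that are physically blocked right now
    risk: dict[str, float]     # estimated risk per maneuver, 0.0 (safe) to 1.0 (dangerous)


def decide(candidates: list[str], hint: RouteHint, seen: Perception) -> str:
    """Score each feasible maneuver: the route preference is only one input."""
    def score(m: str) -> float:
        route_bonus = hint.weight if m == hint.maneuver else 0.0
        return route_bonus - 2.0 * seen.risk.get(m, 0.0)

    feasible = [m for m in candidates if m not in seen.blocked]
    if not feasible:
        return "stop"
    return max(feasible, key=score)   # ties resolve to the earliest candidate


options = ["turn_right", "go_straight", "turn_left"]
hint = RouteHint("turn_right")
print(decide(options, hint, Perception(blocked=set(), risk={})))                              # turn_right
print(decide(options, hint, Perception(blocked={"turn_right"}, risk={"turn_left": 0.4})))    # go_straight
```

When the right turn is blocked, the route hint simply loses, and the map’s contribution shrinks to the destination and topology needed to re-plan.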

3. GNSS Interruption (Urban Canyons and Tunnels)

When GPS signals are lost in tunnels or urban canyons, vehicles rely on pre-stored maps, and this role will likely persist for a long time. Even advanced reasoning AI can hallucinate when sensors are contaminated or signals are lost. In such cases, maps act as a memory of the physical world, providing electronic horizon (e-horizon) functions that inform the AI of what its sensors cannot see and thereby prevent blind driving.
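A minimal sketch of this role: dead reckoning advances the vehicle’s along-path position from its last satellite fix, and an electronic-horizon query returns mapped features ahead that the sensors cannot yet see. The tunnel map, feature offsets, and wheel-speed samples are hypothetical, and real dead reckoning accumulates drift, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MapFeature:
    offset_m: float   # distance along the mapped path from its start
    kind: str


# Hypothetical pre-stored map for a tunnel section; offsets are along-path distances.
PATH_FEATURES = [
    MapFeature(1200.0, "lane merge"),
    MapFeature(1650.0, "sharp curve, 60 km/h"),
    MapFeature(2100.0, "tunnel exit"),
]


def dead_reckon(last_fix_offset_m: float, speeds_mps: list[float], dt: float) -> float:
    """Advance the along-path position from the last GNSS fix by integrating wheel speed."""
    return last_fix_offset_m + sum(v * dt for v in speeds_mps)


def electronic_horizon(position_m: float, horizon_m: float,
                       features: list[MapFeature] = PATH_FEATURES) -> list[tuple[float, str]]:
    """Return (distance ahead, feature) for mapped features within the horizon."""
    return [(f.offset_m - position_m, f.kind) for f in features
            if 0.0 <= f.offset_m - position_m <= horizon_m]


# GNSS lost 1000 m into the path; then 30 s at 20 m/s, sampled every 0.5 s.
pos = dead_reckon(1000.0, [20.0] * 60, dt=0.5)
print(pos)                              # 1600.0
print(electronic_horizon(pos, 600.0))   # [(50.0, 'sharp curve, 60 km/h'), (500.0, 'tunnel exit')]
```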

4. Redundancy and Safety Assurance

Maps have served as a database of real-world hazards and traffic laws, essentially a digital notice board for driving. While simulations can generate an effectively unlimited number of edge cases, maps will continue to be the means by which social rules and physical constraints are instilled in the AI. However, the delivery format may shift from coordinate-based maps to “data factories” linked with imagery.
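The map’s role as a database of hazards and traffic laws can be sketched as a rule layer keyed by road segment that checks, and where necessary overrides, a planner’s proposal while recording why. The segment IDs, rules, and `apply_map_constraints` function are hypothetical, and a runtime check like this is only one of the ways a map could feed rules to an AI; rules could equally be absorbed during training.

```python
from dataclasses import dataclass, field


@dataclass
class SegmentRules:
    """Hypothetical rule layer for one road segment, as a map might store it."""
    speed_limit_kph: float
    prohibited_maneuvers: set[str] = field(default_factory=set)
    hazards: list[str] = field(default_factory=list)


RULES = {
    "seg-17": SegmentRules(30.0, {"u_turn"}, ["school zone"]),
    "seg-18": SegmentRules(60.0),
}


def apply_map_constraints(segment_id: str, maneuver: str,
                          target_speed_kph: float) -> tuple[str, float, list[str]]:
    """Clamp the planner's proposal to the mapped rules and explain every change."""
    rules = RULES[segment_id]
    notes = [f"hazard: {h}" for h in rules.hazards]
    if maneuver in rules.prohibited_maneuvers:
        notes.append(f"{maneuver} prohibited on {segment_id}, keeping lane")
        maneuver = "keep_lane"
    if target_speed_kph > rules.speed_limit_kph:
        notes.append(f"speed capped at {rules.speed_limit_kph:.0f} km/h")
        target_speed_kph = rules.speed_limit_kph
    return maneuver, target_speed_kph, notes


print(apply_map_constraints("seg-17", "u_turn", 45.0))
# ('keep_lane', 30.0, ['hazard: school zone', 'u_turn prohibited on seg-17, keeping lane', 'speed capped at 30 km/h'])
```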

5. Simulation

The era of manually creating 3D environments (buildings, trees, etc.) and layering them onto 2D maps is ending. Point cloud collection and real-world imagery now serve as direct sources for simulation. As techniques for replicating reality become faster and more capable, maps that depend on manual labor will lose their relevance as an intermediate step.

Roles Becoming More Critical

While the role of the traditional coordinate-based database is shrinking, the map as a “memory of reality” and a “data factory for rules” remains essential. Two key trends emerge at this turning point:

Who Will Be the Data Conflator?

The core competitiveness of Physical AI lies in securing dense, wide-ranging real-world data. If an OEM updates its maps using only its own vehicle data, reasoning in data-sparse areas will remain shallow. Global companies such as Mercedes, Hyundai, and BYD are joining NVIDIA’s ecosystem primarily to access the collective intelligence formed by merging fragmented data.

Competition to become the Conflator (the entity that integrates this data) will intensify. NVIDIA seeks to manage this cycle through its hardware and software stack, while traditional map providers such as TomTom and HERE Technologies are also vying for the role, although matching the volume of high-quality data available to NVIDIA remains a significant challenge for them.
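Why merging fleets matters can be shown with a toy conflator that combines observations from several hypothetical OEM fleets per map cell using confidence-weighted voting. The fleet names, cell IDs, and confidence values are invented, and weighted voting is a deliberately simple stand-in for real conflation, which must also align geometry and handle stale data. The point is coverage: a single fleet only knows the cells it drove through.

```python
from collections import defaultdict


def conflate(observations: list[tuple[str, str, str, float]]) -> dict[str, tuple[str, float]]:
    """Merge fleet observations per map cell by confidence-weighted voting.

    Each observation is (source_fleet, cell_id, observed_value, confidence 0..1).
    Returns {cell_id: (winning_value, share of the cell's total confidence it received)}.
    """
    votes = defaultdict(lambda: defaultdict(float))
    for _fleet, cell, value, conf in observations:
        votes[cell][value] += conf
    merged = {}
    for cell, tally in votes.items():
        value, weight = max(tally.items(), key=lambda kv: kv[1])
        merged[cell] = (value, weight / sum(tally.values()))
    return merged


obs = [
    ("oem_a", "cell-1", "speed_limit=50", 0.9),
    ("oem_b", "cell-1", "speed_limit=50", 0.7),
    ("oem_c", "cell-1", "speed_limit=30", 0.4),   # stale or misread sign
    ("oem_b", "cell-2", "lane_closed", 0.8),      # only fleet B drove here
    ("oem_c", "cell-3", "new_crosswalk", 0.6),    # only fleet C drove here
]
only_a = conflate([o for o in obs if o[0] == "oem_a"])
everyone = conflate(obs)
print(sorted(only_a))       # ['cell-1']
print(sorted(everyone))     # ['cell-1', 'cell-2', 'cell-3']
print(everyone["cell-1"])   # winning value 'speed_limit=50' with about 80% of the weight
```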

Emerging Core Competencies

Since simulations cannot fully reproduce reality, two types of expertise will become increasingly valuable:

Local Data Source Experts

It is economically and physically impossible to virtualize every local accident, road closure, or new traffic regulation worldwide in real time. Local experts who can accurately supply a data factory with the nuances of a specific country’s traffic culture and rules, as well as small changes in road conditions, will see their value recognized again.

Simulation Architects

The path from source data to a finished simulation environment cannot be fully automated. Specialists who understand physical laws and can both transform road data into simulation assets and control those environments will play a core role in the reasoning-based autonomous driving ecosystem.